Tag: Optics

-

Torch Lens Maker

https://victorpoughon.github.io/torchlensmaker – A Python library for designing optical elements using optimization models with PyTorch

-

You’re Probably Wrong About Rainbows

https://youtu.be/24GfgNtnjXc – On the physics behind rainbows (via @Veritasium)

-

Hearing with light, saying hello to coal (again), and more: THE WEEKLY RECAP (2022#29)

Lots of interesting stuff to share. A farewell to one of the most clever game studios out there, the latest advances in optoelectronics, another take on how we humans will end up exterminating ourselves, and how scientists plan to improve weather forecasting with the help of turtles are just a few of the links this week.…

-

Additive manufacturing for the development of optical/photonic systems and components

Earlier this year, I was at Photonics Europe 2022 in Strasbourg. I enjoyed many of the talks, but one topic really piqued my interest: additive manufacturing. I have always been interested in 3D printing, and I know from experience how useful it can be: printing mechanical pieces in the…

-

Sony’s Destiny, Leonardo’s telescope, and more: THE WEEKLY RECAP (2022#05)

A really packed week. Maybe some good job-related news in the near future? Fingers crossed. Also, more and more deadlines are incoming, and winter has been hitting quite hard over the past few days: Spring, come save us soon. A matter of Destiny? Who would have thought that, after creating the most iconic Xbox IP (Halo), Bungie would…

-

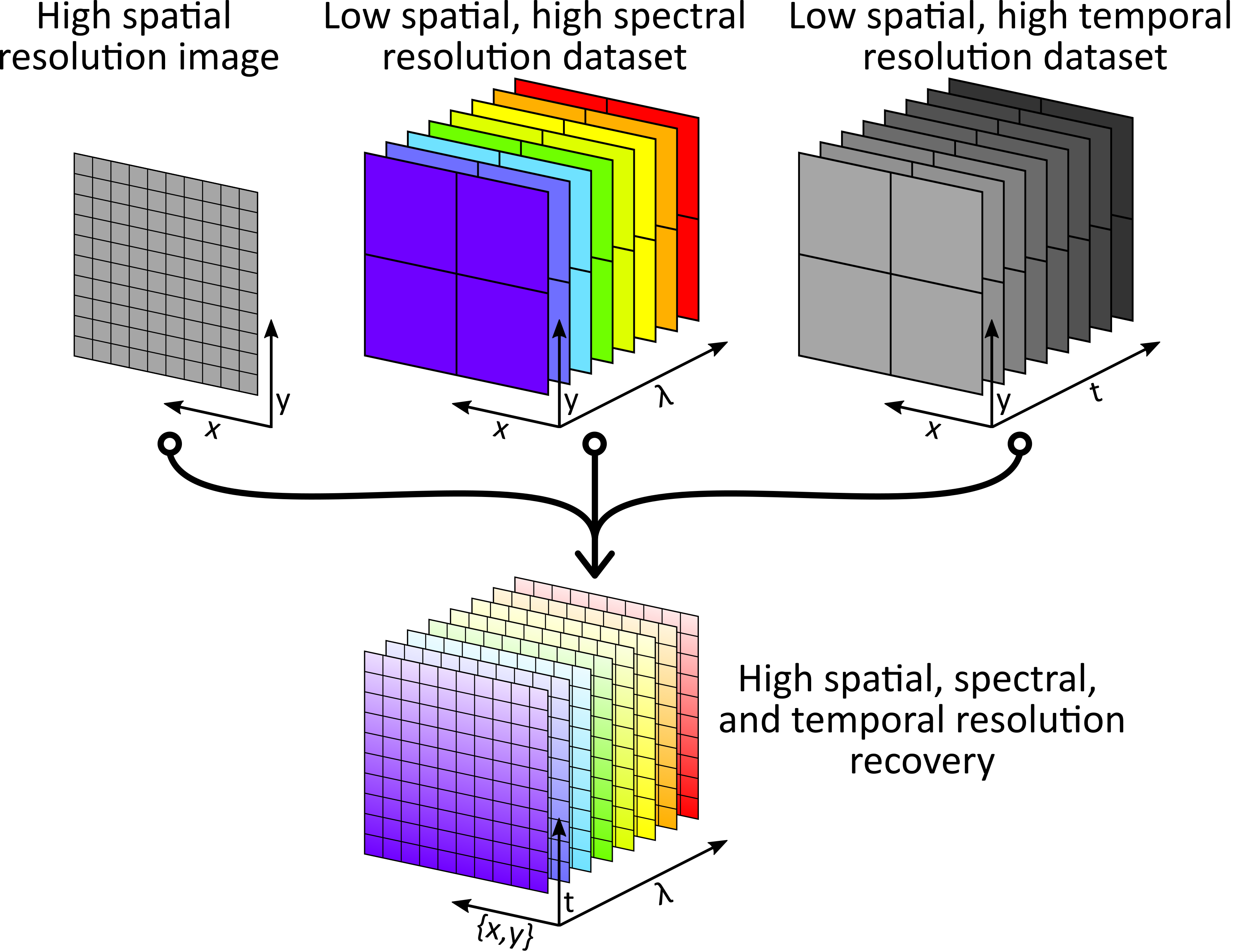

Giga-voxel multidimensional fluorescence imaging combining single-pixel detection and data fusion

Some time ago I wrote a short post about using Data Fusion (DF) to perform a kind of Compressive Sensing (CS). We came up with that idea when tackling a common problem in multidimensional imaging systems: the more you want to measure, the harder it gets. It is not only the fact that you need a…